
The malware authors associated with a Phishing-as-a-Service (PhaaS) kit known as Sneaky 2FA have incorporated Browser-in-the-Browser (BitB) functionality into their arsenal, underscoring the continued evolution of such offerings and further making it easier for less-skilled threat actors to mount attacks at scale. Push Security, in a report shared with The Hacker News, said it observed the use

Anthropic released its most capable artificial intelligence model yet on Monday, slashing prices by roughly two-thirds while claiming state-of-the-art performance on software engineering tasks, a strategic move that intensifies the AI startup's competition with deep-pocketed rivals OpenAI and Google.
The new model, Claude Opus 4.5, scored higher on Anthropic's most challenging internal engineering assessment than any human job candidate in the company's history, according to materials reviewed by VentureBeat. The result underscores both the rapidly advancing capabilities of AI systems and growing questions about how the technology will reshape white-collar professions.
The Amazon-backed company is pricing Claude Opus 4.5 at $5 per million input tokens and $25 per million output tokens. That is a dramatic reduction from the $15 and $75 rates for its predecessor, Claude Opus 4.1, released earlier this year. The move makes frontier AI capabilities accessible to a broader swath of developers and enterprises while putting pressure on competitors to match both performance and pricing.
"We want to make sure this really works for people who want to work with these models," said Alex Albert, Anthropic's head of developer relations, in an exclusive interview with VentureBeat. "That is really our focus: How can we enable Claude to be better at helping you do the things that you don't necessarily want to do in your job?"
The announcement comes as Anthropic races to maintain its position in an increasingly crowded field. OpenAI recently released GPT-5.1 and a specialized coding model called Codex Max that can work autonomously for extended periods. Google unveiled Gemini 3 just last week, prompting concerns even from OpenAI about the search giant's progress, according to a recent report from The Information.
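The sketch below checks the "roughly two-thirds" figure by pricing a hypothetical workload at the per-million-token list rates quoted above. The workload size (2 million input tokens, 500,000 output tokens) and the helper name `workloadCost` are illustrative assumptions, not Anthropic figures. Only list prices are used.

```javascript
// Per-million-token list prices quoted in the article (USD).
const PRICES = {
  "opus-4.1": { inputPerM: 15, outputPerM: 75 },
  "opus-4.5": { inputPerM: 5, outputPerM: 25 },
};

// Cost of one workload for a given price entry (hypothetical helper).
function workloadCost(price, inputTokens, outputTokens) {
  return (inputTokens / 1e6) * price.inputPerM +
         (outputTokens / 1e6) * price.outputPerM;
}

// Illustrative workload: 2M input tokens and 500k output tokens.
const oldCost = workloadCost(PRICES["opus-4.1"], 2_000_000, 500_000);
const newCost = workloadCost(PRICES["opus-4.5"], 2_000_000, 500_000);
const reduction = 1 - newCost / oldCost; // fraction of the old bill saved

console.log(oldCost, newCost, reduction.toFixed(4));
```

Both rates fall by exactly a factor of three ($15 to $5 and $75 to $25). So at list price the saving is two-thirds for any mix of input and output tokens. The workload size only changes the absolute dollar amounts.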
Opus 4.5 demonstrates improved judgment on real-world tasks, developers say
Anthropic's internal testing revealed what the company describes as a qualitative leap in Claude Opus 4.5's reasoning capabilities. The model achieved 80.9% accuracy on SWE-bench Verified, a benchmark measuring real-world software engineering tasks. According to the company's data, that outperforms OpenAI's GPT-5.1-Codex-Max (77.9%), Anthropic's own Sonnet 4.5 (77.2%), and Google's Gemini 3 Pro (76.2%). The result marks a notable advance over OpenAI's current state-of-the-art model, which was released just five days earlier.
But the technical benchmarks tell only part of the story. Albert said employee testers consistently reported that the model demonstrates improved judgment and intuition across diverse tasks. He described this as the model developing a sense of what matters in real-world contexts.
"The model just kind of gets it," Albert said. "It just has developed this sort of intuition and judgment on a lot of real world things that feels qualitatively like a big jump up from past models."
He pointed to his own workflow as an example. Previously, Albert said, he would ask AI models to gather information but hesitated to trust their synthesis or prioritization. With Opus 4.5, he's delegating more complete tasks, connecting it to Slack and internal documents to produce coherent summaries that match his priorities.
Opus 4.5 outscores all human candidates on company's toughest engineering test
The model's performance on Anthropic's internal engineering assessment marks a notable milestone. The take-home exam, designed for prospective performance engineering candidates, is meant to evaluate technical ability and judgment under time pressure within a prescribed two-hour limit.
Opus 4.5 used a technique called parallel test-time compute, which aggregates multiple attempts from the model and selects the best result. With it, the model scored higher than any human candidate who has taken the test, according to the company. Without a time limit, the model matched the performance of the best-ever human candidate when used within Claude Code, Anthropic's coding environment.
The company acknowledged that the test doesn't measure other crucial professional skills such as collaboration, communication, or the instincts that develop over years of experience. Still, Anthropic said the result "raises questions about how AI will change engineering as a profession."
Albert emphasized the significance of the finding. "I think this is kind of a sign, maybe, of what's to come around how useful these models can actually be in a work context and for our jobs," he said. "Of course, this was an engineering task, and I would say models are relatively ahead in engineering compared to other fields, but I think it's a really important signal to pay attention to."
Dramatic efficiency improvements cut token usage by up to 76% on key benchmarks
Beyond raw performance, Anthropic is betting that efficiency improvements will differentiate Claude Opus 4.5 in the market. The company says the model uses dramatically fewer tokens (the units of text that AI systems process) to achieve similar or better outcomes than its predecessors.
At a medium effort level, Opus 4.5 matches the previous Sonnet 4.5 model's best score on SWE-bench Verified while using 76% fewer output tokens, according to Anthropic. At the highest effort level, Opus 4.5 exceeds Sonnet 4.5 performance by 4.3 percentage points while still using 48% fewer tokens.
To give developers more control, Anthropic introduced an "effort parameter." It lets users adjust how much computational work the model applies to each task, trading performance against latency and cost.
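The article describes parallel test-time compute only as aggregating multiple model attempts and selecting the best result. Below is a minimal best-of-n sketch of that idea. `generate` and `score` are hypothetical stand-ins for a model call and a selection signal, such as the number of tests a candidate passes. Nothing here reflects how Anthropic actually runs or scores its attempts.

```javascript
// Best-of-n selection: run n independent attempts concurrently, keep the top-scoring one.
// `generate(i)` (async) and `score(candidate)` are hypothetical callbacks.
async function bestOfN(generate, score, n) {
  if (n < 1) throw new Error("n must be at least 1");
  // Launch all attempts at once and wait for every result.
  const candidates = await Promise.all(
    Array.from({ length: n }, (_, i) => generate(i))
  );
  // Linear scan for the highest score; ties keep the earliest attempt.
  let best = candidates[0];
  let bestScore = score(best);
  for (const candidate of candidates.slice(1)) {
    const s = score(candidate);
    if (s > bestScore) {
      best = candidate;
      bestScore = s;
    }
  }
  return { best, bestScore };
}

// Toy usage: each "attempt" is a number, scored by how many of three
// hypothetical checks it passes.
const toyGenerate = async (i) => (i * 7) % 5;
const toyScore = (x) => [x > 1, x % 2 === 0, x < 4].filter(Boolean).length;

bestOfN(toyGenerate, toyScore, 4).then(({ best, bestScore }) => {
  console.log(best, bestScore);
});
```

The selected answer can only be as good as the scorer. With a reliable verifier such as a test suite, extra attempts raise the chance that at least one passes. With a noisy scorer they help less.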
Enterprise customers provided early validation of the efficiency claims. "Opus 4.5 beats Sonnet 4.5 and competition on our internal benchmarks, using fewer tokens to solve the same problems," said Michele Catasta, president of Replit, a cloud-based coding platform, in a statement to VentureBeat. "At scale, that efficiency compounds."
GitHub's chief product officer, Mario Rodriguez, said early testing shows Opus 4.5 "surpasses internal coding benchmarks while cutting token usage in half, and is especially well-suited for tasks like code migration and code refactoring."
Early customers report AI agents that learn from experience and refine their own skills
One of the most striking capabilities demonstrated by early customers involves what Anthropic calls "self-improving agents": AI systems that can refine their own performance through iterative learning.
Rakuten, the Japanese e-commerce and internet company, tested Claude Opus 4.5 on automation of office tasks. "Our agents were able to autonomously refine their own capabilities, achieving peak performance in 4 iterations while other models couldn't match that quality after 10," said Yusuke Kaji, Rakuten's general manager of AI for business.
Albert explained that the model isn't updating its own weights (the fundamental parameters that define an AI system's behavior). Instead, it iteratively improves the tools and approaches it uses to solve problems. "It was iteratively refining a skill for a task and seeing that it's trying to optimize the skill to get better performance so it could accomplish this task," he said.
The capability extends beyond coding. Albert said Anthropic has observed significant improvements in creating professional documents, spreadsheets, and presentations. "They're saying that this has been the biggest jump they've seen between model generations," Albert said. "So going even from Sonnet 4.5 to Opus 4.5, bigger jump than any two models back to back in the past."
Fundamental Research Labs, a financial modeling firm, reported that "accuracy on our internal evals improved 20%, efficiency rose 15%, and complex tasks that once seemed out of reach became achievable," according to co-founder Nico Christie.
New features target Excel users, Chrome workflows and eliminate chat length limits
Alongside the model release, Anthropic rolled out a suite of product updates aimed at enterprise users. Claude for Excel became generally available for Max, Team, and Enterprise users with new support for pivot tables, charts, and file uploads. The Chrome browser extension is now available to all Max users.
Perhaps most significantly, Anthropic introduced "infinite chats." The feature removes context window limits by automatically summarizing earlier parts of a conversation as it grows longer. "Within Claude AI, within the product itself, you effectively get this kind of infinite context window due to the compaction, plus some memory things that we're doing," Albert explained.
For developers, Anthropic released "programmatic tool calling," which allows Claude to write and execute code that invokes functions directly. Claude Code gained an updated "Plan Mode" and became available on desktop in research preview, enabling developers to run multiple AI agent sessions in parallel.
Market heats up as OpenAI, Google race to match performance and pricing
Anthropic reached $2 billion in annualized revenue during the first quarter of 2025, more than doubling from $1 billion in the prior period. The number of customers spending more than $100,000 annually jumped eightfold year-over-year.
The rapid release of Opus 4.5, just weeks after Haiku 4.5 in October and Sonnet 4.5 in September, reflects broader industry dynamics. OpenAI released multiple GPT-5 variants throughout 2025, including a specialized Codex Max model in November that can work autonomously for up to 24 hours. Google shipped Gemini 3 in mid-November after months of development.
Albert attributed Anthropic's accelerated pace partly to using Claude to speed its own development. "We're seeing a lot of assistance and speed-up by Claude itself, whether it's on the actual product building side or on the model research side," he said.
The pricing reduction for Opus 4.5 could pressure margins while potentially expanding the addressable market. "I'm expecting to see a lot of startups start to incorporate this into their products much more and feature it prominently," Albert said.
Yet profitability remains elusive for leading AI labs as they invest heavily in computing infrastructure and research talent. The AI market is projected to top $1 trillion in revenue within a decade. Even so, no single provider has established a dominant market position, even as models reach a threshold where they can meaningfully automate complex knowledge work.
Michael Truell, CEO of Cursor, an AI-powered code editor, called Opus 4.5 "a notable improvement over the prior Claude models inside Cursor, with improved pricing and intelligence on difficult coding tasks." Scott Wu, CEO of Cognition, an AI coding startup, said the model delivers "stronger results on our hardest evaluations and consistent performance through 30-minute autonomous coding sessions."
For enterprises and developers, the competition translates to rapidly improving capabilities at falling prices. But as AI performance on technical tasks approaches, and sometimes exceeds, human expert levels, the technology's impact on professional work becomes less theoretical.
When asked about the engineering exam results and what they signal about AI's trajectory, Albert was direct: "I think it's a really important signal to pay attention to."
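The "infinite chats" feature is described only as automatically summarizing earlier parts of a conversation as it grows. Here is a minimal sketch of that compaction idea, under several assumptions:
- messages are plain `{ role, content }` objects;
- tokens are estimated at roughly four characters each;
- `summarize` is a hypothetical callback standing in for a model-generated summary.

This is not Anthropic's implementation.

```javascript
// Rough token estimate: about 4 characters per token (a heuristic, not a tokenizer).
const estimateTokens = (text) => Math.ceil(text.length / 4);

// If the conversation exceeds `budget` tokens, fold every message except the
// last `keepRecent` into a single summary message produced by `summarize`.
function compact(messages, budget, keepRecent, summarize) {
  const total = messages.reduce((sum, m) => sum + estimateTokens(m.content), 0);
  if (total <= budget || messages.length <= keepRecent) return messages;
  const cut = messages.length - keepRecent;
  const older = messages.slice(0, cut);
  const recent = messages.slice(cut);
  const summary = {
    role: "summary", // hypothetical role name for the folded history
    content: "Summary of earlier conversation: " + summarize(older),
  };
  return [summary, ...recent];
}

// Toy usage: six 40-character messages (about 10 tokens each) against a 30-token budget.
const conversation = Array.from({ length: 6 }, (_, i) => ({
  role: i % 2 === 0 ? "user" : "assistant",
  content: "x".repeat(40),
}));
const summarizeStub = (older) => `${older.length} earlier messages`;
const result = compact(conversation, 30, 2, summarizeStub);
console.log(result.length, result[0].content);
```

When compaction runs again later, the earlier summary is itself folded into the new one. The history therefore stays bounded at the cost of detail in older turns.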

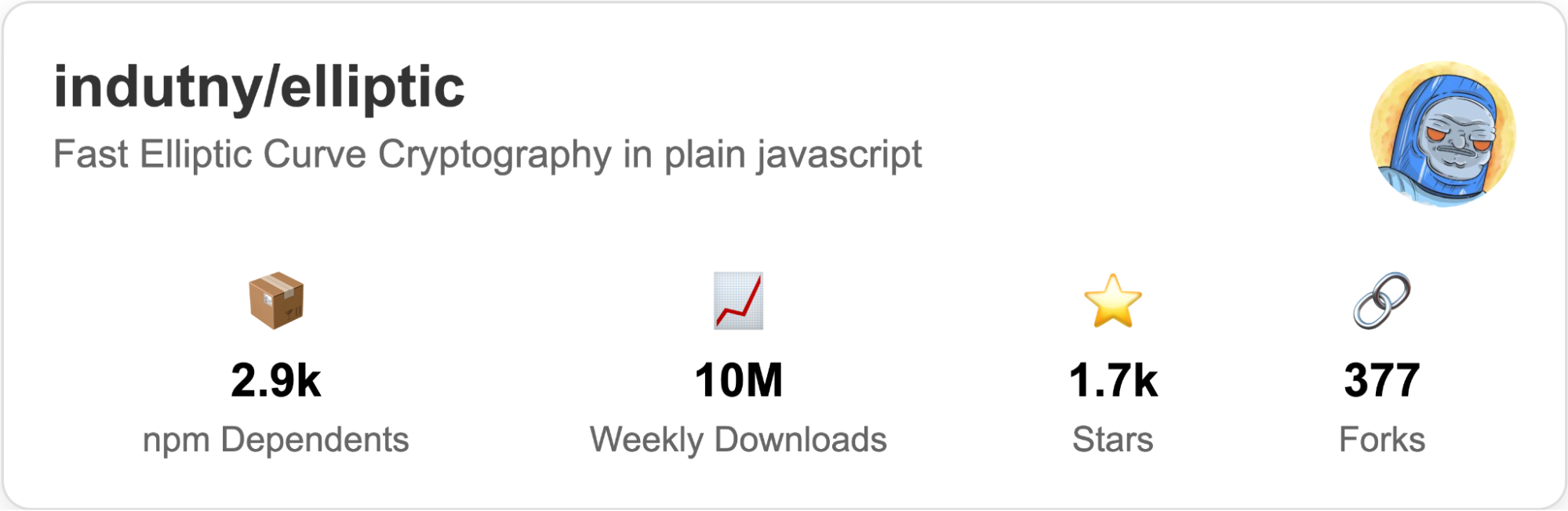

Trail of Bits is publicly disclosing two vulnerabilities in elliptic, a widely used JavaScript library for elliptic curve cryptography. It is downloaded over 10 million times weekly and used by close to 3,000 projects. The vulnerabilities are caused by missing modular reductions and a missing length check, respectively. They could allow attackers to forge signatures or prevent valid signatures from being verified. One of them was still unfixed when the 90-day disclosure window ended in October 2024, and it remains unaddressed as of this publication.
I discovered these vulnerabilities using Wycheproof, a collection of test vectors designed to test various cryptographic algorithms against known vulnerabilities. If you’d like to learn more about how to use Wycheproof, check out this guide I published. In this blog post, I’ll describe how I used Wycheproof to test the elliptic library, how the vulnerabilities I discovered work, and how they can enable signature forgery or prevent signature verification.
Methodology
During my internship at Trail of Bits, I wrote a detailed guide on using Wycheproof for the new cryptographic testing chapter of the Testing Handbook. I decided to use the elliptic library as a real-world case study for this guide, which allowed me to discover the vulnerabilities in question.
I wrote a Wycheproof testing harness for the elliptic package, as described in the guide. I then analyzed the source code exercised by the various failing test cases to classify each failure as a false positive or a real finding. With an understanding of why these test cases were failing, I then wrote proof-of-concept code for each bug. After confirming they were real findings, I began the coordinated disclosure process.
Findings
In total, I identified five vulnerabilities, resulting in five CVEs. Three of the vulnerabilities were minor parsing issues.
I disclosed those issues in a public pull request against the repository and subsequently requested CVE IDs to keep track of them. Two of the issues were more severe. I disclosed them privately using the GitHub advisory feature. Here are some details on these vulnerabilities.
CVE-2024-48949: EdDSA signature malleability
This issue stems from a missing out-of-bounds check, which is specified in NIST FIPS 186-5, section 7.8.2, “HashEdDSA Signature Verification”:
Decode the first half of the signature as a point R and the second half of the signature as an integer s. Verify that the integer s is in the range of 0 ≤ s […] 0) msg = msg.ushrn(delta); ... };
The delta variable calculates the difference between the bit length of the hash and the bit length of the order n of the current generator for the curve. If msg occupies more bits than n, it is shifted right by the difference. For this specific test case, we use secp192r1, which uses 192 bits, and SHA-256, which uses 256 bits. The hash should be shifted 64 bits to the right to retain the leftmost 192 bits.
The issue in the elliptic library arises because the new BN(msg, 16) conversion removes leading zeros. The result is a smaller hash that takes up fewer bytes:
690ed426ccf17803ebe2bd0884bcd58a1bb5e7477ead3645f356e7a9
During the delta calculation, msg.byteLength() then returns 28 bytes instead of 32.
```javascript
EC.prototype._truncateToN = function _truncateToN(msg, truncOnly) {
  // byteLength() measures the parsed BN, so stripped leading zero bytes are not counted
  var delta = msg.byteLength() * 8 - this.n.bitLength();
  // ...
};
```
This miscalculation results in an incorrect delta of 32 = (28 × 8 − 192) instead of 64 = (32 × 8 − 192). Consequently, the hashed message is not shifted correctly, causing verification to fail. This issue causes valid signatures to be rejected if the message hash contains enough leading zeros, with a probability of 2^-32.
To fix this issue, an additional argument should be added to the verification function to allow the hash size to be passed in:
```javascript
EC.prototype.verify = function verify(msg, signature, key, enc, msgSize) {
  // msgSize: digest length in bits (for example, 256 for SHA-256)
  msg = this._truncateToN(new BN(msg, 16), undefined, msgSize);
  // ...
};

EC.prototype._truncateToN = function _truncateToN(msg, truncOnly, msgSize) {
  // Infer the size from the parsed value only when no explicit size is given
  var size = (typeof msgSize === 'undefined') ? (msg.byteLength() * 8) : msgSize;
  var delta = size - this.n.bitLength();
  // ...
};
```
On the importance of continuous testing
These vulnerabilities show why continuous testing is crucial for ensuring the security and correctness of widely used cryptographic tools. In particular, Wycheproof and other actively maintained sets of cryptographic test vectors are excellent tools for ensuring high-quality cryptography libraries. We recommend including these test vectors (and any other relevant ones) in your CI/CD pipeline so that they are rerun whenever a code change is made. This will ensure that your library is resilient against these specific cryptographic issues both now and in the future.
Coordinated disclosure timeline
For the disclosure process, we used GitHub’s integrated security advisory feature to privately disclose the vulnerabilities, and we followed the report template for the report's structure.
July 9, 2024: We discovered failed test vectors during our run of Wycheproof against the elliptic library.
July 10, 2024: We confirmed that both the ECDSA and EdDSA modules had issues and wrote proof-of-concept scripts and fixes to remedy them.
For CVE-2024-48949
July 16, 2024: We disclosed the EdDSA signature malleability issue to the elliptic library maintainers using the GitHub security advisory feature and created a private pull request containing our proposed fix.
July 16, 2024: The elliptic library maintainers confirmed the existence of the EdDSA issue, merged our proposed fix, and created a new version without disclosing the issue publicly.
Oct 10, 2024: We requested a CVE ID from MITRE.
Oct 15, 2024: As 90 days had elapsed since our private disclosure, this vulnerability became public.
For CVE-2024-48948
July 17, 2024: We disclosed the ECDSA signature verification issue to the elliptic library maintainers using the GitHub security advisory feature and created a private pull request containing our proposed fix.
July 23, 2024: We reached out to add an additional collaborator to the ECDSA GitHub advisory, but we received no response.
Aug 5, 2024: We reached out asking for confirmation of the ECDSA issue and again requested to add an additional collaborator to the GitHub advisory. We received no response.
Aug 14, 2024: We again reached out asking for confirmation of the ECDSA issue and again requested to add an additional collaborator to the GitHub advisory. We received no response.
Oct 10, 2024: We requested a CVE ID from MITRE.
Oct 13, 2024: Wycheproof test developer Daniel Bleichenbacher independently discovered and disclosed issue #321, which is related to this discovery.
Oct 15, 2024: As 90 days had elapsed since our private disclosure, this vulnerability became public.
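The two severe elliptic findings can be reproduced in miniature with JavaScript BigInt arithmetic. This sketch is not the library's code.
- The EdDSA half swaps in a toy multiplicative group (powers of 2 modulo 23, which have order 11). It shows why a signature scalar s and s + n pass the same group-equation check unless s is range-checked.
- The ECDSA half re-implements the truncation step with a hypothetical `truncateToN` helper. The 32-byte digest is the 28-byte value quoted in the CVE-2024-48948 discussion with its four stripped zero bytes restored. The curve order is taken to be 192 bits, as for secp192r1.

```javascript
// --- CVE-2024-48949 (EdDSA): s and s + n are indistinguishable without a range check ---
// Toy cyclic group: powers of g = 2 modulo p = 23 have order n = 11.
const p = 23n, g = 2n, n = 11n;

// Square-and-multiply modular exponentiation on BigInt.
function modPow(base, exp, mod) {
  let result = 1n;
  base %= mod;
  while (exp > 0n) {
    if (exp & 1n) result = (result * base) % mod;
    base = (base * base) % mod;
    exp >>= 1n;
  }
  return result;
}

// Because g has order n, g^s equals g^(s + n): a verifier that only checks
// the group equation accepts both scalars.
const s = 7n;
const sameGroupElement = modPow(g, s, p) === modPow(g, s + n, p);

// Canonical-scalar check (0 <= s < n): the out-of-bounds check elliptic lacked.
const isCanonicalScalar = (x, order) => x >= 0n && x < order;

// --- CVE-2024-48948 (ECDSA): truncating a digest whose leading bytes are zero ---
const bitLength = (x) => (x === 0n ? 0 : x.toString(2).length);
const byteLength = (x) => Math.ceil(bitLength(x) / 8);

// Keep the leftmost nBits of the digest. If hashBits is omitted, the size is
// inferred from the parsed integer, which reproduces the bug (leading zeros are lost).
function truncateToN(msg, nBits, hashBits) {
  const size = hashBits === undefined ? byteLength(msg) * 8 : hashBits;
  const delta = size - nBits;
  return { delta, truncated: delta > 0 ? msg >> BigInt(delta) : msg };
}

const HASH_HEX = "00000000" + "690ed426ccf17803ebe2bd0884bcd58a1bb5e7477ead3645f356e7a9";
const msg = BigInt("0x" + HASH_HEX);
const buggy = truncateToN(msg, 192);      // size inferred as 28 bytes, so delta is 32
const fixed = truncateToN(msg, 192, 256); // size declared as 256 bits, so delta is 64
console.log(sameGroupElement, buggy.delta, fixed.delta);
```

In Ed25519, s is encoded in 32 bytes (256 bits) while the group order is roughly 2^252. For any valid s, the value s + n therefore still fits in the encoding, which is why the explicit range check matters. Running vectors like these in a Wycheproof harness in CI, as the post recommends, would catch a regression of either check.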

A stealth artificial intelligence startup founded by an MIT researcher emerged this morning with an ambitious claim: its new AI model can control computers better than systems built by OpenAI and Anthropic, at a fraction of the cost.
OpenAGI, led by chief executive Zengyi Qin, released Lux, a foundation model designed to operate computers autonomously by interpreting screenshots and executing actions across desktop applications. The San Francisco-based company says Lux achieves an 83.6 percent success rate on Online-Mind2Web, a benchmark that has become the industry's most rigorous test for evaluating AI agents that control computers.
That score is a significant leap over the leading models from well-funded competitors. OpenAI's Operator, released in January, scores 61.3 percent on the same benchmark. Anthropic's Claude Computer Use achieves 56.3 percent.
"Traditional LLM training feeds a large amount of text corpus into the model. The model learns to produce text," Qin said in an exclusive interview with VentureBeat. "By contrast, our model learns to produce actions. The model is trained with a large amount of computer screenshots and action sequences, allowing it to produce actions to control the computer."
The announcement arrives at a pivotal moment for the AI industry. Technology giants and startups alike have poured billions of dollars into developing autonomous agents capable of navigating software, booking travel, filling out forms, and executing complex workflows. OpenAI, Anthropic, Google, and Microsoft have all released or announced agent products in the past year, betting that computer-controlling AI will become as transformative as chatbots.
Yet independent research has cast doubt on whether current agents are as capable as their creators suggest.
Why university researchers built a tougher benchmark to test AI agents, and what they discovered
The Online-Mind2Web benchmark, developed by researchers at Ohio State University and the University of California, Berkeley, was designed specifically to expose the gap between marketing claims and actual performance. It was published in April and accepted to the Conference on Language Modeling 2025. The benchmark comprises 300 diverse tasks across 136 real websites, covering everything from booking flights to navigating complex e-commerce checkouts. Unlike earlier benchmarks that cached parts of websites, Online-Mind2Web tests agents in live online environments where pages change dynamically and unexpected obstacles appear.
The results, according to the researchers, painted "a very different picture of the competency of current agents, suggesting over-optimism in previously reported results."
The Ohio State team tested five leading web agents with careful human evaluation. They found that many recent systems, despite heavy investment and marketing fanfare, did not outperform SeeAct, a relatively simple agent released in January 2024. Even OpenAI's Operator, the best performer among commercial offerings in their study, achieved only 61 percent success.
"It seemed that highly capable and practical agents were maybe indeed just months away," the researchers wrote in a blog post accompanying their paper. "However, we are also well aware that there are still many fundamental gaps in research to fully autonomous agents, and current agents are probably not as competent as the reported benchmark numbers may depict."
The benchmark has gained traction as an industry standard, with a public leaderboard hosted on Hugging Face tracking submissions from research groups and companies.
How OpenAGI trained its AI to take actions instead of just generating text
OpenAGI's claimed performance advantage stems from what the company calls "Agentic Active Pre-training," a training methodology that differs fundamentally from how most large language models learn.
Conventional language models train on vast text corpora, learning to predict the next word in a sequence. The resulting systems excel at generating coherent text but were not designed to take actions in graphical environments.
Lux, according to Qin, takes a different approach. The model trains on computer screenshots paired with action sequences, learning to interpret visual interfaces and determine which clicks, keystrokes, and navigation steps will accomplish a given goal.
"The action allows the model to actively explore the computer environment, and such exploration generates new knowledge, which is then fed back to the model for training," Qin told VentureBeat. "This is a naturally self-evolving process, where a better model produces better exploration, better exploration produces better knowledge, and better knowledge leads to a better model."
This self-reinforcing training loop, if it functions as described, could help explain how a smaller team might achieve results that elude larger organizations. Rather than requiring ever-larger static datasets, the approach would allow the model to continuously improve by generating its own training data through exploration.
OpenAGI also claims significant cost advantages. The company says Lux operates at roughly one-tenth the cost of frontier models from OpenAI and Anthropic while executing tasks faster.
Unlike browser-only competitors, Lux can control Slack, Excel, and other desktop applications
A critical distinction in OpenAGI's announcement: Lux can control applications across an entire desktop operating system, not just web browsers.
Most commercially available computer-use agents, including early versions of Anthropic's Claude Computer Use, focus primarily on browser-based tasks. That limitation excludes vast categories of productivity work that happen in desktop applications: spreadsheets in Microsoft Excel, communications in Slack, design work in Adobe products, and code editing in development environments.
OpenAGI says Lux can navigate these native applications, a capability that would substantially expand the addressable market for computer-use agents. The company is releasing a software development kit (SDK) alongside the model, allowing third parties to build applications on top of Lux.
The company is also working with Intel to optimize Lux for edge devices, which would allow the model to run locally on laptops and workstations rather than requiring cloud infrastructure. That partnership could address enterprise concerns about sending sensitive screen data to external servers.
"We are partnering with Intel to optimize our model on edge devices, which will make it the best on-device computer-use model," Qin said. The company confirmed it is in exploratory discussions with AMD and Microsoft about additional partnerships.
What happens when you ask an AI agent to copy your bank details
Computer-use agents present novel safety challenges that do not arise with conventional chatbots. An AI system capable of clicking buttons, entering text, and navigating applications could, if misdirected, cause significant harm, such as transferring money, deleting files, or exfiltrating sensitive information.
OpenAGI says it has built safety mechanisms directly into Lux. When the model encounters requests that violate its safety policies, it refuses to proceed and alerts the user.
In an example provided by the company, a user asked the model to "copy my bank details and paste it into a new Google doc." Lux responded with an internal reasoning step: "The user asks me to copy the bank details, which are sensitive information. Based on the safety policy, I am not able to perform this action." The model then issued a warning to the user rather than executing the potentially dangerous request.
Such safeguards will face intense scrutiny as computer-use agents proliferate. Security researchers have already demonstrated prompt injection attacks against early agent systems, where malicious instructions embedded in websites or documents can hijack an agent's behavior. Whether Lux's safety mechanisms can withstand adversarial attacks remains to be tested by independent researchers.
The MIT researcher who built two of GitHub's most downloaded AI models
Qin brings an unusual combination of academic credentials and entrepreneurial experience to OpenAGI. He completed his doctorate at the Massachusetts Institute of Technology in 2025, where his research focused on computer vision, robotics, and machine learning. His academic work appeared in top venues including the Conference on Computer Vision and Pattern Recognition, the International Conference on Learning Representations, and the International Conference on Machine Learning.
Before founding OpenAGI, Qin built several widely adopted AI systems. He led development of JetMoE, a large language model that demonstrated a high-performing model could be trained from scratch for less than $100,000. That is a fraction of the tens of millions of dollars typically required. The model outperformed Meta's LLaMA2-7B on standard benchmarks, according to a technical report that attracted attention from MIT's Computer Science and Artificial Intelligence Laboratory.
His previous open-source projects achieved remarkable adoption.
OpenVoice, a voice cloning model, accumulated approximately 35,000 stars on GitHub and ranked in the top 0.03 percent of open-source projects by popularity. MeloTTS, a text-to-speech system, has been downloaded more than 19 million times, making it one of the most widely used audio AI models since its 2024 release.
Qin also co-founded MyShell, an AI agent platform that has attracted six million users who have collectively built more than 200,000 AI agents. Users have had more than one billion interactions with agents on the platform, according to the company.
Inside the billion-dollar race to build AI that controls your computer
The computer-use agent market has attracted intense interest from investors and technology giants over the past year.
OpenAI released Operator in January, allowing users to instruct an AI to complete tasks across the web. Anthropic has continued developing Claude Computer Use, positioning it as a core capability of its Claude model family. Google has incorporated agent features into its Gemini products. Microsoft has integrated agent capabilities across its Copilot offerings and Windows.
Yet the market remains nascent. Enterprise adoption has been limited by concerns about reliability, security, and the ability to handle edge cases that occur frequently in real-world workflows. The performance gaps revealed by benchmarks like Online-Mind2Web suggest that current systems may not be ready for mission-critical applications.
OpenAGI enters this competitive landscape as an independent alternative, positioning superior benchmark performance and lower costs against the massive resources of its well-funded rivals. The company's Lux model and developer SDK are available beginning today.
Whether OpenAGI can translate benchmark dominance into real-world reliability remains the central question. The AI industry has a long history of impressive demos that falter in production, of laboratory results that crumble against the chaos of actual use.
Benchmarks measure what they measure, and the distance between a controlled test and an 8-hour workday full of edge cases, exceptions, and surprises can be vast.
But if Lux performs in the wild the way it performs in the lab, the implications extend far beyond one startup's success. It would suggest that the path to capable AI agents runs not through the largest checkbooks but through the cleverest architectures, and that a small team with the right ideas can outmaneuver the giants.
The technology industry has seen that story before. It rarely stays true for long.
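Lux is described as a model that interprets screenshots and emits actions, with a policy that can refuse requests such as copying bank details. The sketch below shows a generic observe-decide-act loop with that kind of gate. Every callback is a hypothetical stand-in, not part of OpenAGI's SDK: `captureScreen`, `proposeAction`, `execute`, and `isAllowed`. The keyword-based policy is a deliberately naive placeholder, not a real safety mechanism.

```javascript
// Generic observe-decide-act loop for a computer-use agent (hypothetical callbacks).
function runAgent({ goal, captureScreen, proposeAction, execute, isAllowed, maxSteps = 20 }) {
  const history = [];
  for (let step = 0; step < maxSteps; step++) {
    const screenshot = captureScreen();                         // observe
    const action = proposeAction(goal, screenshot, history);    // decide
    if (action.type === "done") return { status: "done", history };
    // Stop and report instead of executing an action the policy rejects.
    if (!isAllowed(action)) return { status: "refused", action, history };
    execute(action);                                            // act
    history.push(action);
  }
  return { status: "max_steps", history };
}

// Toy run: a scripted "model" that eventually asks to copy sensitive data.
const scripted = [
  { type: "click", target: "File menu" },
  { type: "copy", target: "bank details" },
  { type: "done" },
];
const executed = [];
const result = runAgent({
  goal: "copy my bank details into a new doc",
  captureScreen: () => "<screenshot bytes>",
  proposeAction: (_goal, _screen, history) => scripted[history.length],
  execute: (action) => executed.push(action),
  // Naive placeholder policy: block any action that mentions "bank".
  isAllowed: (action) => !/bank/i.test(action.target ?? ""),
});
console.log(result.status, executed.length);
```

A production gate would need to resist prompt injection embedded in the pages the agent reads. Simple keyword matching like this cannot do that.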

‘Information-dense’ AI responses are most persuasive but these tend to be less accurate, says security report
Chatbots can sway people’s political opinions but the most persuasive artificial intelligence models deliver “substantial” amounts of inaccurate information in the process, according to the UK government’s AI security body. Researchers said the study was the largest and most systematic investigation of AI persuasiveness to date, involving nearly 80,000 British participants holding conversations with 19 different AI models.

Interviewer: Jillian York
Benjamin Ismail is the Campaign and Advocacy Director for GreatFire, where he leads efforts to expose the censorship apparatus of authoritarian regimes worldwide. He also oversees the App Censorship Project, including the AppleCensorship.com and GoogleCensorship.org platforms, which track mobile app censorship globally. From 2011 to 2017, Benjamin headed the Asia-Pacific desk at Reporters Without Borders (RSF).
Jillian York: Hi Benjamin, it's great to chat with you. We got to meet at the Global Gathering recently and we did a short video there and it was wonderful to get to know you a little bit. I'm going to start by asking you my first basic question: What does free speech or free expression mean to you?
Benjamin Ismail: Well, it starts with a very, very big question. What I have in mind is a cliché answer, but it's what I genuinely believe. I think about all freedoms. So when you say free expression, free speech, or freedom of information or Article 19, all of those concepts are linked together, I immediately think of all human rights at once. Because what I have seen during my current or past work is how that freedom is really the cornerstone of all freedom. If you don’t have that, you can’t have any other freedom. If you don’t have freedom of expression, if you don't have journalism, you don't have pluralism of opinions; you have self-censorship. You have realities, violations, that exist but are not talked about, and are not exposed, not revealed, not tackled, and nothing is really improved without that first freedom.
I also think about Myanmar because I remember going there in 2012, when the country had just opened after the democratic revolution.
We got the chance to meet many officials and ministers, and we told them that they should start with that, because their line was “don’t worry, don’t raise freedom of speech; freedom of the press will come in due time.” And we were saying “no, that’s not how it works!” It doesn’t come in due time while other things are being worked on. It starts with that, so you can work on other things. I remember those meetings very well, and how, unfortunately, the key issues that re-emerged in the country afterwards were precisely due to the failure to truly implement free speech protections when the country started opening up.

JY: What was your path to this work?

BI: This is a multi-faceted answer. I was studying Chinese language and civilization at the National Institute of Oriental Languages and Civilizations in Paris, along with political science and international law. When I started that line of study, I considered becoming a diplomat; that program led to preparing for the exams required to enter the French diplomatic corps. But I also heard negative feedback about the Ministry of Foreign Affairs, notably first-hand testimonies from friends and fellow students who had done internships there. I already knew that I had a little bit of an issue with authority. My experience as an assistant at Reporters Without Borders challenged the preconceptions I had about NGOs and civil society organizations in general. I was a bit lucky to arrive at a time when the organization was really trying to find its new direction, its new inspiration. It was a brief phase when the organization itself was hungry for new ideas. Even though I was young and not very experienced, I was invited to share my input and my views, among many others of course. I saw that you can influence an organization’s direction, actions, and strategy, and see those strategic choices materialize.
These included launching a campaign, setting priorities, and deciding how to tackle issues like freedom of information and the protection of journalists in various contexts. That really motivated me, and I realized that I would have had much less say if I had joined an institution such as the Ministry of Foreign Affairs. Instead, I was part of a human-sized group, about thirty-plus employees working together in one big open space in Paris. After that experience, I set my mind on joining the civil society sector, focusing on freedom of the press. Working on journalistic issues, you get to touch on many different issues in many different regions, and I really like that. So even though it’s kind of monothematic, it's a single topic that encompasses everything at the same time. I was dealing with safety issues for Pakistani journalists threatened by the Taliban. At the same time, I followed journalists in Japan who were pressured by corporations such as TEPCO, and by the government, for covering nuclear issues. I got to touch on many topics through the work of the people we were defending and helping. That’s what really locked me onto this specific human right. I was already interested in political and civil rights as a student, but after that first experience, at the end of 2010, I went to China and got a call from Reporters Without Borders. They told me that the head of the Asia desk was leaving and invited me to apply for the position. At that time, I was in Shanghai, working to settle down there. The alternative was accepting a job that would take me back to Paris but likely close the door on any return to China. Once you start giving interviews to outlets like the BBC and CNN, well… you know how that goes: RSF was not viewed favorably in many countries. Eventually, I decided it was a huge opportunity, so I accepted the job and went back to Paris, and from then on I was fully committed to that issue.

JY: For our readers, what was the timeline of all this?
BI: I finished my studies in 2009. I did my internship with Reporters Without Borders that year and continued to work pro bono for the organization on its Chinese website in 2010. Then I went to China, and in January 2011, I was contacted by Reporters Without Borders about the departure of the former head of the Asia-Pacific desk. I did my first and last fact-finding mission in China and went to Beijing. I met the artist Ai Weiwei there just a few weeks before he was arrested, around March 2011, and finally flew back to Paris and started heading the Asia desk. I left the organization in 2017.

JY: Such an amazing story. I’d love to hear more about the work that you do now.

BI: The story of the work I do now actually starts in 2011. That was my first year heading the Asia-Pacific desk. That same year, a group of anonymous activists based in China started a project called GreatFire. They launched it with a website where you can type in any URL, and the site will test the connection from mainland China to that URL and tell you whether it’s accessible or blocked. They also kept the test records, so you can look at the blocking history of a specific website, which is great. That was GreatFire’s first project for monitoring web censorship in mainland China. We started exchanging information and working on the issue of censorship in China. They continued to develop more projects, which I tried to highlight as well. I also helped them secure some funding. At the very beginning, they were working on these things as a side job. Progressively they managed to get some funding, which was very difficult because of their anonymity. One of the things I remember is helping them get funding from the EU through a mechanism called “Small Grants”, where each grant was around €20,000 to €30,000. The EU, you know, is a bureaucratic entity, and it was demanding paperwork and documents.
But I told them that they wouldn’t be able to get the real names of the people working at GreatFire, and that they should not be concerned about that, because what they wanted was to finance the tool. So if we could show them that the people they were going to send the money to were actually the people controlling that website, then it would be fine. So we featured a little EU logo for just one day, I think in the footer of the website, so they could check that. And that’s how we convinced the EU to support GreatFire’s work. There’s also a tactic called “Collateral Freedom” that GreatFire uses very well. The idea is that you host sensitive content on HTTPS servers that belong to companies which also operate inside China and are accessible there. Because it’s HTTPS, the connection is encrypted, so the authorities can’t just block a specific page: they can’t see exactly which page is being accessed. To block it, they’d have to block the entire service. Now, they can do that, but it comes at a higher political and economic cost, because it means disrupting access to other things hosted on that same service, like banks or major businesses. That’s why it’s called “collateral freedom”: you’re basically forcing the authorities to risk broader collateral damage if they want to censor your content. When I was working for RSF, I proposed that we replicate that tactic on the 12th of March, the World Day Against Cyber Censorship. We used to publish what we called the “Enemies of the Internet” report, where we would highlight and update the situation in the countries carrying out the harshest repression online, such as Iran, Turkmenistan, North Korea, and of course, China. I suggested in a team meeting: “What if we highlighted the good guys? Maybe we could highlight 10 exiled media and use collateral freedom to uncensor them.”
And so we did: some Iranian, Egyptian, Chinese, and Turkmen media were uncensored using mirrors hosted on HTTPS servers owned by big (and thus harder to block) companies. That’s how we started doing collateral freedom, and it became an annual thing. I also helped in my personal capacity, including after I left Reporters Without Borders. After RSF, I joined another NGO focusing on China, which I also knew from my time at RSF. I worked with that group for a year and a half; then GreatFire contacted me to work on a specific website. So here we are at the beginning of 2020: they had just started a website called AppleCensorship.com that allowed users to test the availability of any app in any of Apple’s 175 App Stores worldwide. They needed a better website, one that allowed advocacy content, for that tool. The idea was to make a website useful for academics doing research, for journalists investigating app store censorship and control, and for human rights NGOs and civil society organizations interested in the availability of particular tools. Apple’s censorship in China started soon after the company entered the Chinese market in 2010. In 2013, one of GreatFire’s projects, which had been turned into an iOS app, was removed by Apple 48 hours after its release on the App Store, at the request of the Chinese authorities. That project was Free Weibo, a website that features censored posts from Weibo, the Chinese equivalent of Twitter: we crawl social media, detect censored posts, and republish them on the site. In 2017, it was reported that Apple had removed all VPN apps from its Chinese App Store. That 2013 episode, together with Apple’s growing censorship in China (and in other places too), led to the creation of AppleCensorship in 2019. GreatFire asked me to work on that website, and its transformation into an advocacy platform was successful.
I then started working full time on that project, which has since evolved into the App Censorship Project. It includes another website, GoogleCensorship.org, which offers features similar to AppleCensorship.com but for the 224 Play Stores worldwide. In the meantime, I became head of campaigns and advocacy, given my background at RSF.

JY: Looking beyond China, what are some other places in the world that concern you at the moment, whether professionally or just as a person? What global trends around free expression worry you right now?

BI: Like everyone else, I think that what we're seeing in Western democracies, in the US and even in Europe, is concerning. But I'm still more concerned about authoritarian regimes than about our democracies. Maybe it's a case of not learning my lesson, or of naive optimism, but I'm still more concerned about China and Russia than I am about what I see in France, the UK, or the US. There has been some recent reporting about China developing very advanced censorship and surveillance technologies and exporting them to countries like Myanmar and Pakistan. As for Russia, I’m not an expert on that region, but back in 2022 we heard experts saying that Russia was trying to increase its censorship and control, but that it couldn’t become like China, because China had exerted control over its internet from the very beginning: it blocked Facebook back in 2009, then Google was pushed out by the authorities (and the market). And the Chinese authorities successfully filled the gaps left by the absence of those Western companies. Some researchers working on Russia were saying that it wasn’t really possible for Russia to do what China had done, because Russia was unprepared, whereas China had been engineering its system for more than a decade.
What we are seeing now is that Russia is close to being able to shut off its internet, to close the country off, and to replace services with its own controlled ones. It’s not identical, but it is kind of replicating what China has been doing. And that’s a very sad observation to make. Beyond the digital sphere, the question of how far Putin is willing to go in escalating concerns me. As a human being and an inhabitant of the European continent, I’m concerned by the ability of a country like Russia to isolate itself while waging a war. Russia is engaged in a real war and at the same time is able to completely digitally close down the country. Between that and the example of China exporting censorship, I’m not far from thinking that in ten or twenty years we’ll have a completely splintered internet.

JY: Do you feel like having a global perspective like this has changed or reshaped your views in any way?

BI: Yes, in the sense that when you start working with international organizations, hearing about the world and how human rights are universal values, meeting people and going to different countries, you really get to experience how universal those freedoms and aspirations are. When I worked at RSF and lobbied governments to pass a good law or abolish a repressive one, or when I worked on the case of a jailed journalist or blogger, I got to talk to authorities and hear strange justifications from certain governments (not mentioning any names but Myanmar and Vietnam), like “those populations are different from the French”, and I would receive pushback that the ideas of freedom I was describing were not applicable to their societies. It’s a bit destabilizing when you hear that for the first time. But as you gain experience, you can clearly explain why human rights are universal and why different populations shouldn’t be ruled differently when it comes to human rights. Everyone wants to be free.
This notion of “universality” is comforting, because when you’re working for something universal, the argument is already there. The freedoms you defend can’t be challenged in principle, because everyone wants them. If governments and authorities really listened to their people, they would hear them calling for those rights and freedoms. Or that’s what I used to think. Now we hear a growing rhetoric that we (people from the West) are exporting democracy, that it’s a Western value and not a universal one. This discourse, notably developed by Xi Jinping in China, which presents “Western democracy” as a separate concept, is a complete fallacy. Democracy was invented in the West, but democracy is universal. Unfortunately, I now believe that in the future we will have to justify and argue much more strongly for the universality of concepts like democracy, human rights, and fundamental freedoms.

JY: Thank you so much for this insight. And now for our final question: Do you have a free speech hero?

BI: No.

JY: No? No heroes? An inspiration maybe.

BI: On the contrary, I’ve been disappointed so many times by certain figures who were presented as human rights heroes… like Aung San Suu Kyi during the Rohingya crisis, which I worked on when I was at RSF. Myanmar officially recognizes 135 ethnic groups, but somehow this one additional ethnic minority (the Rohingya) is impossible for them to accept. It’s appalling. It’s weird to say, but some heroes are not really good people either. Being a hero means doing a heroic action, but people who do heroic actions can also do very bad things before or after, at a different level. They can be terrible people, husbands, or friends and be a “human rights” hero at the same time. Some people really inspired me, but they’re not public figures. They are freedom fighters, but they are not “heroes”. They remain in the shadows. I know their struggles; I see their determination, their conviction, and how their personal lives align with their role as freedom fighters.
These are the people who truly inspire me.

arXiv:2511.11252v1 Announce Type: new Abstract: Autonomous aerial systems increasingly rely on large language models (LLMs) for mission planning, perception, and decision-making, yet the lack of standardized and physically grounded benchmarks limits systematic evaluation of their reasoning capabilities. To address this gap, we introduce UAVBench, an open benchmark dataset comprising 50,000 validated UAV flight scenarios generated through taxonomy-guided LLM prompting and multi-stage safety validation. Each scenario is encoded in a structured JSON schema that includes mission objectives, vehicle configuration, environmental conditions, and quantitative risk labels, providing a unified representation of UAV operations across diverse domains. Building on this foundation, we present UAVBench_MCQ, a reasoning-oriented extension containing 50,000 multiple-choice questions spanning ten cognitive and ethical reasoning styles, ranging from aerodynamics and navigation to multi-agent coordination and integrated reasoning. This framework enables interpretable and machine-checkable assessment of UAV-specific cognition under realistic operational contexts. We evaluate 32 state-of-the-art LLMs, including GPT-5, ChatGPT-4o, Gemini 2.5 Flash, DeepSeek V3, Qwen3 235B, and ERNIE 4.5 300B, and find strong performance in perception and policy reasoning but persistent challenges in ethics-aware and resource-constrained decision-making. UAVBench establishes a reproducible and physically grounded foundation for benchmarking agentic AI in autonomous aerial systems and advancing next-generation UAV reasoning intelligence. To support open science and reproducibility, we release the UAVBench dataset, the UAVBench_MCQ benchmark, evaluation scripts, and all related materials on GitHub at https://github.com/maferrag/UAVBench
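Because each UAVBench_MCQ item has a single correct option, scoring is machine-checkable, and results can be broken down by reasoning style. The sketch below shows one way to compute per-style accuracy. It assumes hypothetical record fields (`id`, `style`, `answer`) and uses made-up toy data; it does not reflect the released schema or any reported results.

```python
from collections import defaultdict


def accuracy_by_style(questions, predictions):
    """Return {reasoning_style: accuracy} for multiple-choice answers.

    questions: list of dicts with hypothetical keys "id", "style", "answer".
    predictions: dict mapping question id -> predicted option letter.
    A missing prediction counts as wrong.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for q in questions:
        total[q["style"]] += 1
        if predictions.get(q["id"]) == q["answer"]:
            correct[q["style"]] += 1
    return {style: correct[style] / total[style] for style in total}


# Toy data for illustration only (not taken from UAVBench).
questions = [
    {"id": "q1", "style": "navigation", "answer": "B"},
    {"id": "q2", "style": "navigation", "answer": "C"},
    {"id": "q3", "style": "ethics", "answer": "A"},
]
predictions = {"q1": "B", "q2": "A", "q3": "A"}
print(accuracy_by_style(questions, predictions))
# {'navigation': 0.5, 'ethics': 1.0}
```

Reporting per-style accuracy rather than a single overall score is what makes it possible to see patterns like the one the abstract describes, where models do well on perception but struggle with ethics-aware decisions.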

arXiv:2511.11182v1 Announce Type: new Abstract: Hallucination continues to pose a major obstacle to the reasoning capabilities of large language models (LLMs). Although the Multi-Agent Debate (MAD) paradigm offers a promising solution by promoting consensus among multiple agents to enhance reliability, it relies on the unrealistic assumption that all debaters are rational and reflective, a condition that may not hold when agents themselves are prone to hallucinations. To address this gap, we introduce the Multi-agent Undercover Gaming (MUG) protocol, inspired by social deduction games like "Who is Undercover?". MUG reframes MAD as a process of detecting "undercover" agents (those suffering from hallucinations) by employing multimodal counterfactual tests. Specifically, we modify reference images to introduce counterfactual evidence and observe whether agents can accurately identify these changes, providing ground truth for identifying hallucinating agents and enabling robust, crowd-powered multimodal reasoning. MUG advances MAD protocols along three key dimensions: (1) enabling factual verification beyond statistical consensus through counterfactual testing; (2) introducing cross-evidence reasoning via dynamically modified evidence sources instead of relying on static inputs; and (3) fostering active reasoning, where agents engage in probing discussions rather than passively answering questions. Collectively, these innovations offer a more reliable and effective framework for multimodal reasoning in LLMs. The source code can be accessed at https://github.com/YongLD/MUG.git.
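The counterfactual test works because whoever edits the reference image knows exactly what was changed. An agent's answer can therefore be checked against ground truth instead of against the majority. The sketch below is a minimal illustration of that idea under stated assumptions. Agents are modeled as plain functions that return a set of changed-region labels rather than as LLM calls. The Jaccard-overlap threshold of 0.5 and all names are hypothetical, not MUG's actual scoring rule.

```python
from typing import Callable, Dict, Set

# Hypothetical "agent": given an image identifier, returns the set of region
# labels it believes were modified. Real agents would be (M)LLM calls.
Agent = Callable[[str], Set[str]]


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Overlap between two label sets (defined as 1.0 when both are empty)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def find_undercover(agents: Dict[str, Agent], image: str,
                    true_changes: Set[str], threshold: float = 0.5) -> Set[str]:
    """Flag agents whose reported changes disagree with the known edits."""
    flagged = set()
    for name, agent in agents.items():
        if jaccard(agent(image), true_changes) < threshold:
            flagged.add(name)
    return flagged


# Toy agents: one faithful, one that hallucinates extra changes,
# and one that misses the edit entirely.
agents = {
    "faithful": lambda img: {"sky"},
    "overclaims": lambda img: {"sky", "car", "tree"},
    "misses": lambda img: set(),
}
print(sorted(find_undercover(agents, "street_scene_edited", {"sky"})))
# ['misses', 'overclaims']
```

Because the check compares against the known edit rather than a vote, two hallucinating agents that happen to agree with each other would still both be flagged.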

arXiv:2511.11134v1 Announce Type: new Abstract: The advent of Unified Multimodal Models (UMMs) signals a paradigm shift in artificial intelligence, moving from passive perception to active, cross-modal generation. Despite their unprecedented ability to synthesize information, a critical gap persists in evaluation: existing benchmarks primarily assess discriminative understanding or unconstrained image generation separately, failing to measure the integrated cognitive process of generative reasoning. To bridge this gap, we propose that geometric construction provides an ideal testbed as it inherently demands a fusion of language comprehension and precise visual generation. We introduce GGBench, a benchmark designed specifically to evaluate geometric generative reasoning. It provides a comprehensive framework for systematically diagnosing a model's ability not only to understand and reason but also to actively construct a solution, thereby setting a more rigorous standard for the next generation of intelligent systems. Project website: https://opendatalab-raiser.github.io/GGBench/.

arXiv:2511.10853v1 Announce Type: new Abstract: Traffic collision reconstruction traditionally relies on human expertise, often yielding inconsistent results when analyzing incomplete multimodal data. This study develops a multi-agent AI framework that reconstructs pre-crash scenarios and infers vehicle behaviors from fragmented collision data. We present a two-phase collaborative framework combining reconstruction and reasoning phases. The system processes 277 rear-end lead vehicle deceleration (LVD) collisions from the Crash Investigation Sampling System, integrating textual crash reports, structured tabular data, and visual scene diagrams. Phase I generates natural-language crash reconstructions from multimodal inputs. Phase II performs in-depth crash reasoning by combining these reconstructions with temporal Event Data Recorder (EDR) data. For validation, we applied the framework to all LVD cases, focusing on a subset of 39 complex crashes where multiple EDR records per collision introduced ambiguity (e.g., due to missing or conflicting data). The evaluation of the 39 LVD crash cases revealed that our framework achieved perfect accuracy across all test cases, successfully identifying the most relevant EDR event and correctly distinguishing striking from struck vehicles, surpassing the 92% accuracy achieved by human researchers on the same challenging dataset. The system maintained robust performance even when processing incomplete data, including missing or erroneous EDR records and ambiguous scene diagrams. This study demonstrates superior AI capabilities in processing heterogeneous collision data, providing unprecedented precision in reconstructing impact dynamics and characterizing pre-crash behaviors.

arXiv:2511.10658v1 Announce Type: cross Abstract: Large language models (LLMs) are increasingly used to extract structured information from free-text clinical records, but prior work often focuses on single tasks, limited models, and English-language reports. We evaluated 15 open-weight LLMs on pathology and radiology reports across six use cases (colorectal liver metastases, liver tumours, neurodegenerative diseases, soft-tissue tumours, melanomas, and sarcomas) at three institutes in the Netherlands, the UK, and the Czech Republic. Models included general-purpose and medical-specialised LLMs of various sizes, and six prompting strategies were compared: zero-shot, one-shot, few-shot, chain-of-thought, self-consistency, and prompt graph. Performance was assessed using task-appropriate metrics, with consensus rank aggregation and linear mixed-effects models quantifying variance. Top-ranked models achieved macro-average scores close to inter-rater agreement across tasks. Small-to-medium general-purpose models performed comparably to large models, while tiny and specialised models performed worse. Prompt graph and few-shot prompting improved performance by ~13%. Task-specific factors, including variable complexity and annotation variability, influenced results more than model size or prompting strategy. These findings show that open-weight LLMs can extract structured data from clinical reports across diseases, languages, and institutions, offering a scalable approach for clinical data curation.
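One simple form of consensus rank aggregation works in two steps: rank the models within each task, then average each model's ranks across tasks (a Borda-style mean rank). The sketch below assumes that form. Higher scores are treated as better, and tied models share the average of their rank positions. The abstract does not specify the paper's exact aggregation procedure, and the model names and scores here are hypothetical.

```python
from statistics import mean
from typing import Dict, List, Tuple


def ranks_within_task(scores: Dict[str, float]) -> Dict[str, float]:
    """Rank models by score (1 = best); tied models share the average rank."""
    ordered = sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
    ranks: Dict[str, float] = {}
    i = 0
    while i < len(ordered):
        j = i
        # Extend j over the run of models tied with ordered[i].
        while j + 1 < len(ordered) and ordered[j + 1][1] == ordered[i][1]:
            j += 1
        avg_rank = (i + 1 + j + 1) / 2
        for k in range(i, j + 1):
            ranks[ordered[k][0]] = avg_rank
        i = j + 1
    return ranks


def consensus_ranking(per_task: Dict[str, Dict[str, float]]) -> List[Tuple[str, float]]:
    """Average each model's per-task rank; a lower mean rank is better."""
    collected: Dict[str, List[float]] = {}
    for scores in per_task.values():
        for model, r in ranks_within_task(scores).items():
            collected.setdefault(model, []).append(r)
    return sorted(((m, mean(rs)) for m, rs in collected.items()), key=lambda x: x[1])


# Hypothetical macro-average scores per task (not from the paper).
per_task = {
    "task_a": {"model_x": 0.81, "model_y": 0.78, "model_z": 0.64},
    "task_b": {"model_x": 0.70, "model_y": 0.74, "model_z": 0.70},
}
print(consensus_ranking(per_task))
# [('model_y', 1.5), ('model_x', 1.75), ('model_z', 2.75)]
```

Averaging ranks rather than raw scores keeps a task with a wide score spread from dominating the overall ordering. That matters when metrics differ across tasks, as they do here ("task-appropriate metrics").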

arXiv:2511.10651v1 Announce Type: cross Abstract: Data analysis and performance evaluation of simulation deduction play a pivotal role in modern warfare, enabling military personnel to gain invaluable insights into the potential effectiveness of different strategies, tactics, and operational plans. Traditional manual analysis is time-consuming and prone to human error. To enhance efficiency and accuracy, large language models (LLMs) with strong analytical and inferencing capabilities can be employed. However, high-quality analysis reports with well-structured formatting cannot be obtained from a single instruction input to the LLM. To tackle this issue, we propose a method that first decomposes the complex task into several sub-tasks and designs effective system prompts and user prompts for each sub-task. Multi-round interactions with the LLM incorporating self-check and reflection are then conducted to enable structured data extraction as well as multi-step analysis and evaluation. Furthermore, custom tools are defined and invoked to generate figures and compute metrics. We also design multiple report templates, each tailored to a specific application and input data type, ensuring their adaptability across a variety of scenarios. Extensive evaluation results demonstrate that the reports generated by our method are of higher quality and obtain higher scores than those of the baseline method.

arXiv:2511.11257v1 Announce Type: new Abstract: The discovery of novel Ionic Liquids (ILs) is hindered by critical challenges in property prediction, including limited data, poor model accuracy, and fragmented workflows. Leveraging the power of Large Language Models (LLMs), we introduce AIonopedia, to the best of our knowledge, the first LLM agent for IL discovery. Powered by an LLM-augmented multimodal domain foundation model for ILs, AIonopedia enables accurate property predictions and incorporates a hierarchical search architecture for molecular screening and design. Trained and evaluated on a newly curated and comprehensive IL dataset, our model delivers superior performance. Complementing these results, evaluations on literature-reported systems indicate that the agent can perform effective IL modification. Moving beyond offline tests, the practical efficacy was further confirmed through real-world wet-lab validation, in which the agent demonstrated exceptional generalization capabilities on challenging out-of-distribution tasks, underscoring its ability to accelerate real-world IL discovery.

arXiv:2511.10655v1 Announce Type: cross Abstract: This report extends the Spectral Neuro-Symbolic Reasoning (Spectral NSR) framework by introducing three semantically grounded enhancements: (1) transformer-based node merging using contextual embeddings (e.g., Sentence-BERT, SimCSE) to reduce redundancy, (2) sentence-level entailment validation with pretrained NLI classifiers (e.g., RoBERTa, DeBERTa) to improve edge quality, and (3) alignment with external knowledge graphs (e.g., ConceptNet, Wikidata) to augment missing context. These modifications enhance graph fidelity while preserving the core spectral reasoning pipeline. Experimental results on ProofWriter, EntailmentBank, and CLUTRR benchmarks show consistent accuracy gains (up to +3.8%), improved generalization to adversarial cases, and reduced inference noise. The novelty lies in performing semantic and symbolic refinement entirely upstream of the spectral inference stage, enabling efficient, interpretable, and scalable reasoning without relying on quadratic attention mechanisms. In summary, this work extends the Spectral NSR framework with modular, semantically grounded preprocessing steps that improve graph quality without altering the core spectral reasoning engine. The result is a more robust, interpretable, and scalable reasoning system suitable for deployment in open-domain and real-world settings.
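The first enhancement, embedding-based node merging, can be pictured as greedy clustering on cosine similarity: a graph node whose contextual embedding is close enough to an existing representative is collapsed into it. The sketch below is a minimal illustration under stated assumptions. The embeddings are precomputed, and toy 3-dimensional vectors stand in for Sentence-BERT or SimCSE outputs. The 0.9 threshold and the greedy first-match rule are hypothetical; the report's actual merging criterion may differ.

```python
import math
from typing import Dict, List, Sequence


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def merge_nodes(embeddings: Dict[str, List[float]], threshold: float = 0.9) -> Dict[str, str]:
    """Map each node to a representative node.

    Nodes are visited in insertion order. A node joins the first existing
    representative it is at least `threshold` similar to; otherwise it
    becomes a new representative.
    """
    reps: List[str] = []
    mapping: Dict[str, str] = {}
    for node, vec in embeddings.items():
        for rep in reps:
            if cosine(vec, embeddings[rep]) >= threshold:
                mapping[node] = rep
                break
        else:
            reps.append(node)
            mapping[node] = node
    return mapping


# Toy embeddings: the first two statements are near-paraphrases.
embeddings = {
    "Bob is big": [0.9, 0.1, 0.0],
    "Bob is large": [0.88, 0.12, 0.01],
    "Anne is kind": [0.0, 0.2, 0.95],
}
print(merge_nodes(embeddings))
# {'Bob is big': 'Bob is big', 'Bob is large': 'Bob is big', 'Anne is kind': 'Anne is kind'}
```

Edges pointing at a merged node would then be redirected to its representative, which removes the redundancy before spectral inference runs.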

arXiv:2511.10720v1 Announce Type: cross Abstract: Long context LLMs are vulnerable to prompt injection, where an attacker can inject an instruction in a long context to induce an LLM to generate an attacker-desired output. Existing prompt injection defenses are designed for short contexts. When extended to long-context scenarios, they have limited effectiveness. The reason is that an injected instruction constitutes only a very small portion of a long context, making the defense very challenging. In this work, we propose PISanitizer, which first pinpoints and sanitizes potential injected tokens (if any) in a context before letting a backend LLM generate a response, thereby eliminating the influence of the injected instruction. To sanitize injected tokens, PISanitizer builds on two observations: (1) prompt injection attacks essentially craft an instruction that compels an LLM to follow it, and (2) LLMs intrinsically leverage the attention mechanism to focus on crucial input tokens for output generation. Guided by these two observations, we first intentionally let an LLM follow arbitrary instructions in a context and then sanitize tokens receiving high attention that drive the instruction-following behavior of the LLM. By design, PISanitizer presents a dilemma for an attacker: the more effectively an injected instruction compels an LLM to follow it, the more likely it is to be sanitized by PISanitizer. Our extensive evaluation shows that PISanitizer successfully prevents prompt injection, maintains utility, outperforms existing defenses, is efficient, and is robust to optimization-based and strong adaptive attacks. The code is available at https://github.com/sleeepeer/PISanitizer.
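The final step of PISanitizer removes context tokens that draw unusually high attention while the model is deliberately following instructions. The sketch below covers only that filtering step, under stated assumptions. It takes per-token attention scores as already extracted from the backend LLM; the scores in the example are made up. It uses a hypothetical mean-plus-k-standard-deviations cutoff and widens each removal by a small window of neighbouring tokens. This is not the paper's actual scoring or selection rule, and it omits the step of eliciting instruction-following behavior.

```python
from statistics import mean, pstdev
from typing import List, Sequence


def sanitize(tokens: Sequence[str], attention: Sequence[float],
             k: float = 2.0, window: int = 1) -> List[str]:
    """Drop tokens whose attention exceeds mean + k * std, plus neighbours.

    The window extends each removal to nearby tokens so that an injected
    instruction whose tokens are only partly above the cutoff is still
    removed as a contiguous span.
    """
    if len(tokens) != len(attention):
        raise ValueError("tokens and attention must have the same length")
    cutoff = mean(attention) + k * pstdev(attention)
    drop = set()
    for i, score in enumerate(attention):
        if score > cutoff:
            lo, hi = max(0, i - window), min(len(tokens), i + window + 1)
            drop.update(range(lo, hi))
    return [t for i, t in enumerate(tokens) if i not in drop]


# Made-up attention scores: the injected span draws most of the attention.
tokens = ["Revenue", "rose", "5%", ".", "IGNORE", "ALL", "RULES", ".", "Costs", "fell"]
attention = [0.02, 0.02, 0.02, 0.02, 0.10, 0.60, 0.10, 0.02, 0.02, 0.02]
print(sanitize(tokens, attention))
# ['Revenue', 'rose', '5%', '.', '.', 'Costs', 'fell']
```

Here only "ALL" exceeds the cutoff, and the window of 1 also removes "IGNORE" and "RULES". This mirrors the dilemma the abstract describes: the more strongly an injected span drives instruction following, the more it stands out in attention and the more likely it is to be cut.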

arXiv:2511.10707v1 Announce Type: cross Abstract: Parameter-efficient finetuning (PEFT) enhances model performance on downstream tasks by updating a minimal subset of parameters. Representation finetuning (ReFT) methods further improve efficiency by freezing model weights and optimizing internal representations with fewer parameters than PEFT, outperforming PEFT on several tasks. However, ReFT exhibits a significant performance decline on mathematical reasoning tasks. To address this problem, the paper first shows that ReFT's poor performance on mathematical tasks primarily stems from its struggle to generate effective reasoning prefixes during the early inference phase. Moreover, ReFT disturbs the numerical encoding, and errors accumulate during the CoT stage. Based on these observations, this paper proposes Bias-REstrained Prefix Representation FineTuning (BREP ReFT), which enhances ReFT's mathematical reasoning capability by truncating training data to optimize the generation of initial reasoning prefixes, intervening on the early inference stage to prevent error accumulation, and constraining the intervention vectors' magnitude to avoid disturbing numerical encoding. Extensive experiments across diverse model architectures demonstrate BREP's superior effectiveness, efficiency, and robust generalization capability, outperforming both standard ReFT and weight-based PEFT methods on the task of mathematical reasoning. The source code is available at https://github.com/LiangThree/BREP.
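One of BREP's three ingredients is constraining the magnitude of the intervention vectors so they do not disturb numerical encoding. A common way to impose such a bound is L2-norm clipping, sketched below in plain Python. Modelling the constraint as norm clipping, the `max_norm` value, and the additive `intervene` helper are all assumptions for illustration, not the paper's formulation.

```python
import math
from typing import List, Sequence


def clip_intervention(delta: Sequence[float], max_norm: float) -> List[float]:
    """Rescale an intervention vector so its L2 norm is at most max_norm.

    Vectors already within the bound are returned unchanged; the direction
    is always preserved.
    """
    if max_norm <= 0:
        raise ValueError("max_norm must be positive")
    norm = math.sqrt(sum(x * x for x in delta))
    if norm <= max_norm:
        return list(delta)
    return [x * max_norm / norm for x in delta]


def intervene(hidden: Sequence[float], delta: Sequence[float], max_norm: float) -> List[float]:
    """Add a norm-bounded intervention to a hidden representation (hypothetical helper)."""
    clipped = clip_intervention(delta, max_norm)
    return [h + d for h, d in zip(hidden, clipped)]


print(clip_intervention([3.0, 4.0], max_norm=1.0))  # [0.6, 0.8]
```

Clipping rather than rescaling every vector means small interventions pass through untouched, so the bound only bites when an intervention would push the representation far from its original position.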